From Hormuz to HUMAIN

How grid constraints are redirecting the $650B AI infrastructure boom

The next constraint on artificial intelligence may not be chips.

It may be electricity — and the geopolitics of the power grid.

MacroMashup Briefing

What happened

Artificial intelligence infrastructure is expanding at extraordinary speed.

Hyperscale technology companies are dramatically increasing capital expenditures to build the next generation of AI data centers and compute infrastructure.

But beneath this expansion lies a slower system.

The power grid.

Electricity generation, transmission, and fuel supply systems evolve far more slowly than software infrastructure.

Why it matters

If AI infrastructure continues expanding at its current pace, electricity systems may become one of the primary constraints shaping where and how quickly artificial intelligence can scale.

The next bottleneck in the AI economy may not come from algorithms or semiconductors.

It may come from electricity.

What to watch

Three signals will determine how this dynamic evolves:

• hyperscale capital expenditure trends

• regional grid congestion and interconnection queues

• geographic migration of AI infrastructure investment

Artificial intelligence is often framed as a software revolution.

Faster models.

More powerful GPUs.

Larger training clusters.

But beneath that digital layer lies a massive physical system.

Electricity.

Every AI query.

Every training run.

Every hyperscale data center.

All of it ultimately runs on power.

And the uncomfortable reality now emerging is this:

The next constraint on artificial intelligence may not come from algorithms or semiconductors. It may come from the power grid.

This Week’s Macro

This week the tension between silicon and steel stopped being a thesis and became a price. Iranian strikes on Qatari LNG facilities and Saudi energy infrastructure pushed Brent crude past $113, up nearly 30% from a week ago. The Hormuz corridor moved from “effectively closed by behavior” to functionally shut, with tanker traffic through the strait near zero.

The same region positioning itself as the overflow destination for AI training load is now the epicenter of a live supply shock.

The Federal Reserve walked into that backdrop and delivered exactly the hold the market expected: rates unchanged at 3.50–3.75%, the third consecutive meeting on pause after three cuts in the final quarter of 2025. But the message underneath was harder than the headline.

The updated dot plot still shows a median of one cut for 2026, but seven of nineteen members now see zero cuts this year, up from six in December. The Summary of Economic Projections revised PCE inflation to 2.7% for both headline and core, a meaningful upward shift from December’s 2.4% and 2.5% respectively.

Powell was blunt: the committee needs “clear progress” on inflation before easing, and the energy shock has pushed that progress further out.

Markets heard it. Equities fell below their 200-day moving averages for the first time since May. The S&P 500 closed at 6,624, the Dow posted a new year-to-date low at 46,225, and losses accelerated into the close.

But the sharpest move was in precious metals: gold crashed below $5,000 to trade near $4,589, an 11.5% drawdown from last week’s level, as the hawkish hold and a surging dollar index (DXY) made non-yielding assets suddenly expensive to hold.

The flight-to-safety bid went to the dollar, not metals. High-yield credit spreads widened another 60 basis points to 370, the widest since late 2025.

For AI investors, this is not a sidebar. When the Fed says “energy-related uncertainty,” it is talking about the forward cost curve for the compute infrastructure the market has been pricing as secular and inevitable.

This week’s macro is not a distraction from the AI story. It is the operating environment for it.

The Physical Infrastructure Behind AI

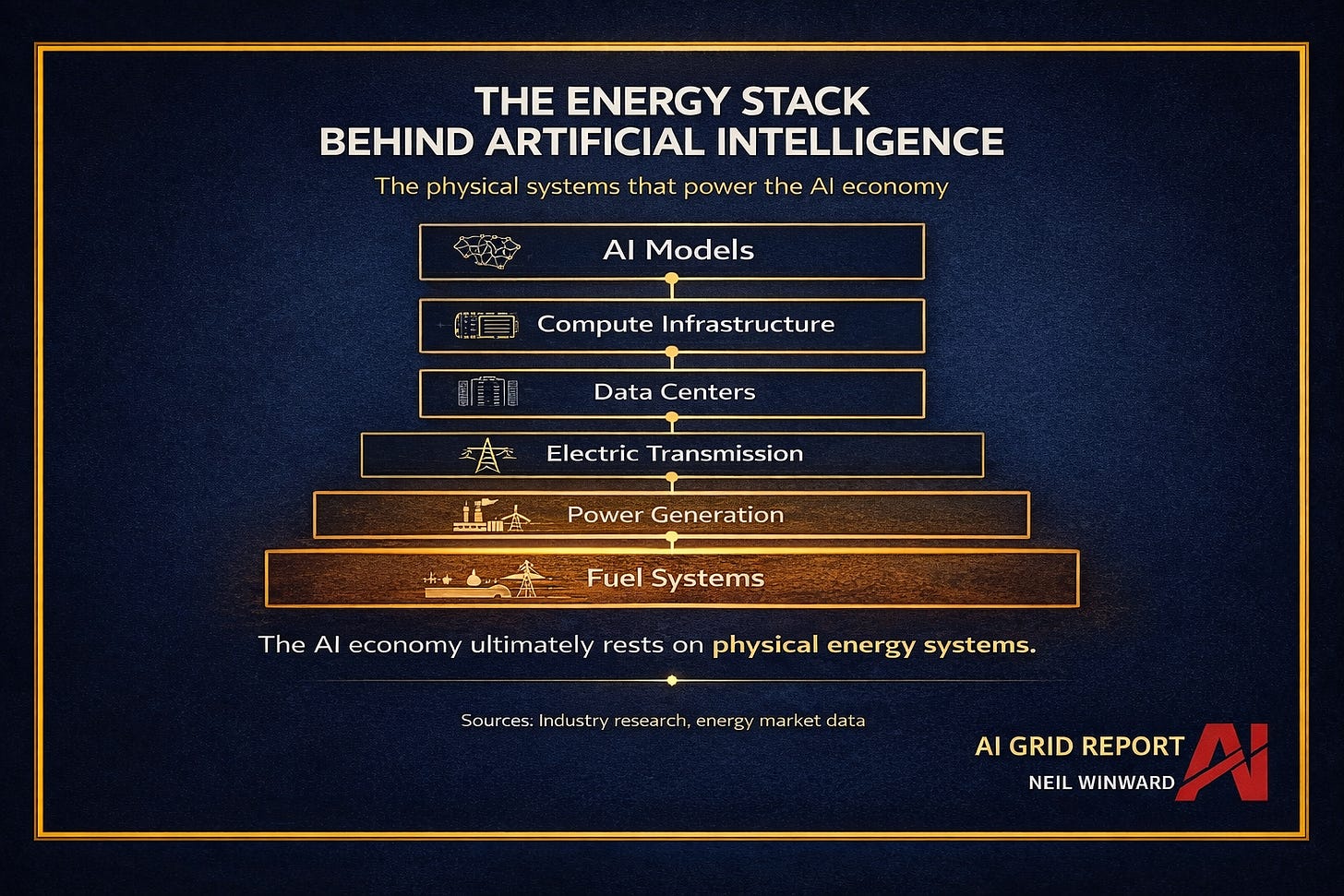

Artificial intelligence sits on top of a layered infrastructure stack.

At the top are the models themselves.

But those models depend on compute clusters, which depend on data centers, which depend on electricity networks, which ultimately depend on fuel systems.

Each layer depends on the ones beneath it.

And while AI innovation operates on startup timelines, the infrastructure layers below it evolve much more slowly.

Transmission systems take years to expand.

Power plants take years to permit and construct.

Fuel supply systems require long-term capital investment.

This creates a structural mismatch.

Artificial intelligence moves at the speed of software.

Energy systems move at the speed of infrastructure.

This gap is beginning to create what we might call the AI Energy Bottleneck.

The Electricity Shock

For nearly two decades, electricity demand growth in many advanced economies remained relatively flat.

Efficiency improvements offset much of the growth from digital technologies.

Artificial intelligence may reverse that trend.

Training large models and operating hyperscale AI data centers requires enormous amounts of electricity.

Large AI campuses are now approaching gigawatt-scale power demand.

That is roughly equivalent to the electricity consumption of a mid-sized city.
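To put the gigawatt comparison in concrete terms, here is a rough back-of-envelope sketch. The household consumption figure (10,500 kWh per year) and the assumption that the campus runs at full load around the clock are illustrative assumptions, not numbers from this briefing, and the function names are hypothetical.

```python
# Back-of-envelope: what does 1 GW of continuous load mean in annual energy?
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(load_gw: float, utilization: float = 1.0) -> float:
    """Annual energy in TWh for a load in GW running at the given utilization."""
    return load_gw * utilization * HOURS_PER_YEAR / 1000.0  # GWh -> TWh


def household_equivalents(
    load_gw: float,
    kwh_per_household_year: float = 10_500,  # ASSUMPTION: illustrative household usage
    utilization: float = 1.0,                # ASSUMPTION: campus runs flat out all year
) -> float:
    """Number of households whose combined annual use matches the campus."""
    twh = annual_energy_twh(load_gw, utilization)
    return twh * 1e9 / kwh_per_household_year  # 1 TWh = 1e9 kWh


campus_twh = annual_energy_twh(1.0)
campus_homes = household_equivalents(1.0)
print(f"{campus_twh:.2f} TWh per year, about {campus_homes:,.0f} households")
```

Under those assumptions, a single 1 GW campus draws about 8.76 TWh a year, on the order of 800,000 households. Lowering the utilization or changing the household figure moves the result, but not the order of magnitude.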

The Next AI Bottleneck

For years the conversation around artificial intelligence has focused on semiconductors.

GPU shortages.

Chip supply chains.

Manufacturing capacity.

But the next constraint may appear somewhere very different.

Not in fabs.

In power plants.

Because every training cluster, every inference engine, and every hyperscale data center ultimately runs on electricity.

Electricity infrastructure does not scale at the speed of software.

The AI boom is beginning to collide with something much older.

The power grid.

Where the Constraint Appears

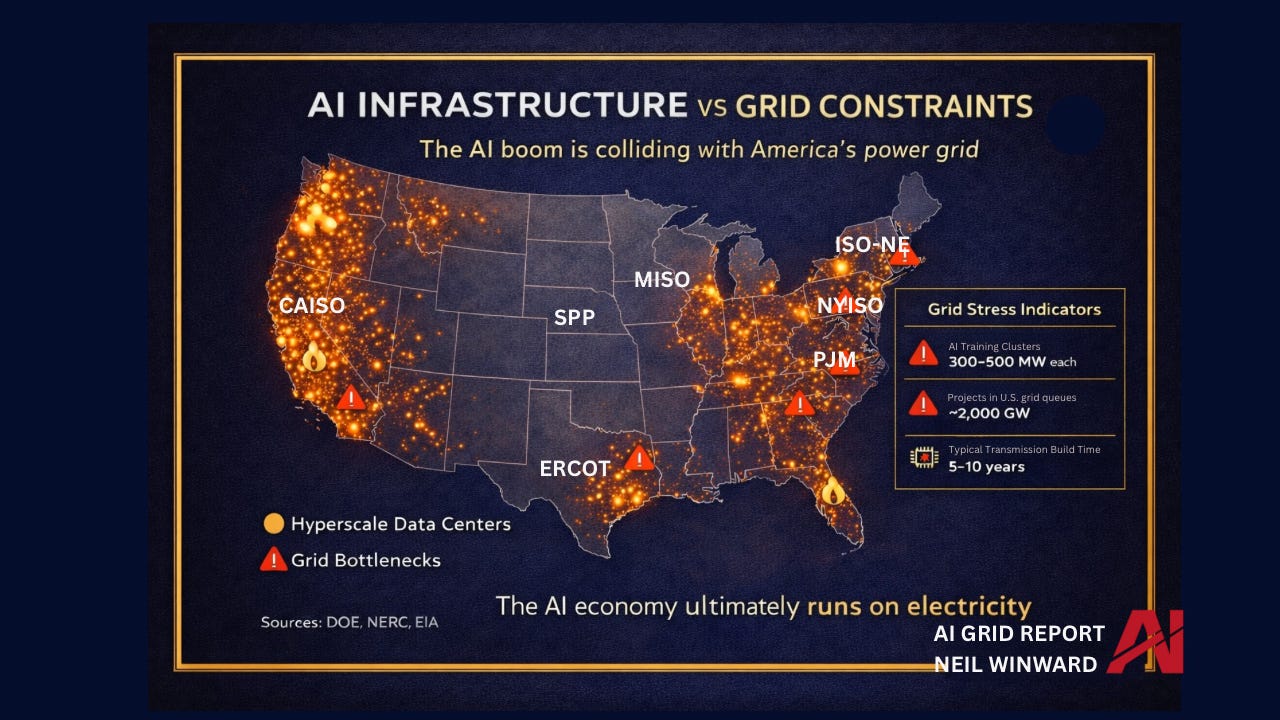

Electricity infrastructure is not evenly distributed.

And neither is AI infrastructure.

Hyperscale data centers tend to cluster in regions where several conditions intersect:

• fiber connectivity

• land availability

• regulatory stability

• existing power infrastructure

But many of those same regions are already experiencing grid congestion.

Several major U.S. power markets — including PJM, ERCOT, CAISO, and ISO-NE — are already facing significant transmission constraints or interconnection backlogs.

Adding gigawatt-scale AI campuses into those systems introduces a new layer of pressure.

Unlike software deployments, electricity infrastructure cannot scale overnight.

Transmission projects require permitting.

Power plants require financing and regulatory approval.

Interconnection queues often stretch for years.

The Constraint Ladder

When infrastructure systems scale unevenly, constraints appear in layers.

Artificial intelligence sits at the top of the stack.

But the layers beneath it determine how quickly the system can expand.

Compute clusters require data centers.

Data centers require electricity.

Electricity requires generation capacity, transmission networks, and fuel supply.

The slowest layer in that system ultimately determines the speed of the entire stack.
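The ladder logic can be sketched in a few lines. This is a minimal illustration, assuming each layer can be summarized by a single lead time; the `Layer` type, the function names, and every number below are hypothetical placeholders, not estimates from this briefing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    lead_time_years: float  # HYPOTHETICAL time needed to add meaningful capacity


# Placeholder values chosen only to illustrate the mechanism.
STACK = [
    Layer("models", 0.5),
    Layer("compute clusters", 1.0),
    Layer("data centers", 2.0),
    Layer("generation", 4.0),
    Layer("transmission", 7.0),
    Layer("fuel supply", 5.0),
]


def binding_constraint(stack: list[Layer]) -> Layer:
    """Return the layer with the longest lead time, i.e. the slowest one."""
    return max(stack, key=lambda layer: layer.lead_time_years)


def stack_lead_time(stack: list[Layer]) -> float:
    """The whole stack can expand no faster than its slowest layer."""
    return binding_constraint(stack).lead_time_years


slowest = binding_constraint(STACK)
print(f"Binding constraint: {slowest.name} ({stack_lead_time(STACK)} years)")
```

The point of the sketch is the `max`: speeding up any layer other than the slowest one leaves the overall timeline unchanged.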

Where Capital Is Going

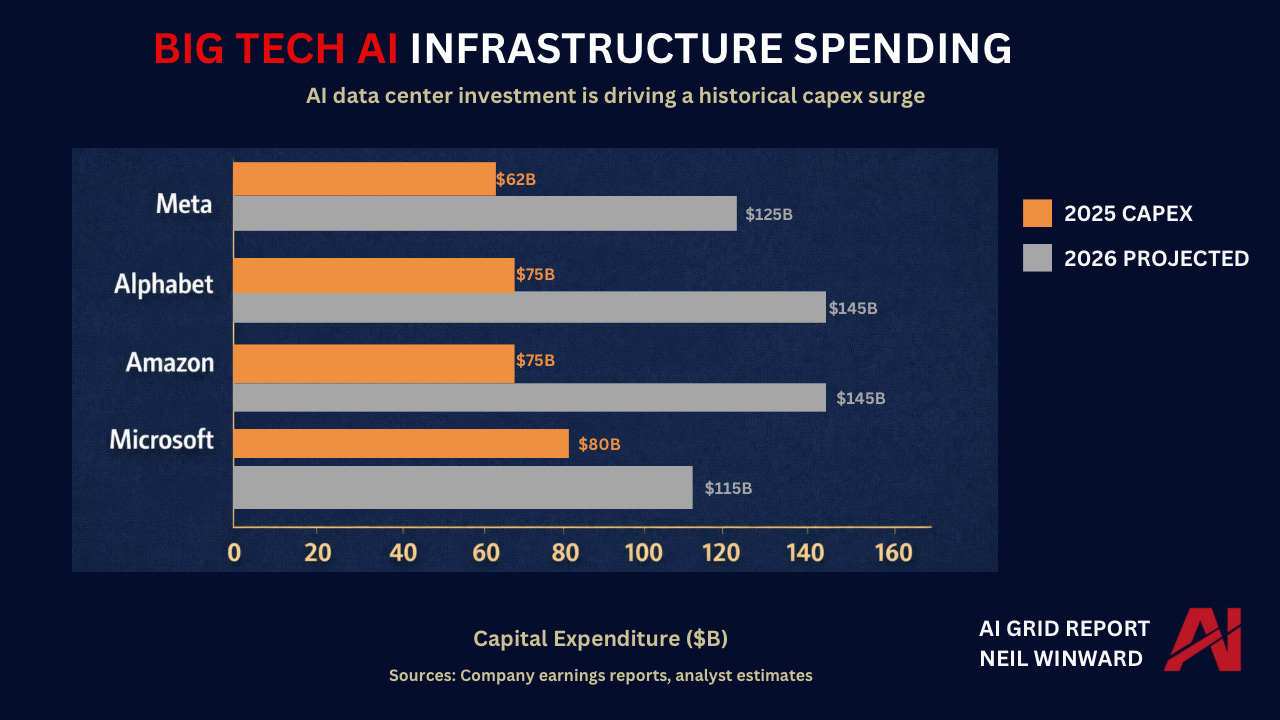

Artificial intelligence infrastructure requires enormous capital investment.

The scale of spending now underway is unprecedented in the technology sector.

Major hyperscale companies are dramatically increasing capital expenditures to build AI infrastructure.

Microsoft, Alphabet, Amazon, and Meta alone are projected to spend hundreds of billions of dollars annually on data centers, chips, and related infrastructure.

Combined capital expenditure across the sector is approaching $650 billion annually.

But capital can move faster than physical infrastructure.

Which raises a critical question:

What happens when investment expands faster than the energy systems needed to support it?

To answer that question, we need to look beyond technology companies and examine the physical infrastructure that powers the AI economy.

The answer begins to appear in places most AI discussions rarely examine.